Netsparker’s Weekly Security Roundup 2017 – Week 51

A weekly security roundup by Netsparker for week 51 of 2017. We examine the differences between the latest version of the OWASP Top 10 and its predecessor. We also delve into the Mailsploit vulnerability, security issues with EV certificates and Google’s .dev support.

Finally – OWASP Top 10 2017!

Although the OWASP Top 10 vulnerability list is not a mandatory web security standard, it plays a significant role in the cybersecurity sector, not least because it is compiled from data collected by the web security community. It has set the agenda since its first publication in 2004.

Four full years after the previous list (2013), OWASP has finally published an updated Top 10 vulnerability list. The list had to overcome considerable initial opposition, and during the preparation process some controversial items were removed while others were revised.

A preliminary and contentious draft was first published in April 2017. In it, OWASP proposed a new item, A7: Insufficient Attack Protection, which many felt was a thinly disguised reference to Contrast Security, the company that recommended the item for the list and that sells an attack-protection product. Naturally, this was met with opposition. After some changes, the item was folded into another entry and ended up in 10th place as Insufficient Logging & Monitoring. The first draft also contained an entry for API security, but it didn’t make it into the final version.

The final 2017 list has much in common with the 2013 Top 10. However, OWASP removed some vulnerabilities from the current version that are known to have a huge impact on the security of web applications, such as open redirection and cross-site request forgery (CSRF), the latter of which OWASP documents once called ‘the sleeping giant’.

These are far from the only changes in the new list:

- Insecure Direct Object References (IDOR), which is among the highest-impact vulnerabilities in mobile security, was merged with Missing Function Level Access Control to become the new A5: Broken Access Control.

- Insufficient Logging & Monitoring, XML External Entity (XXE) and Insecure Deserialization were added as brand-new entries in OWASP Top 10 2017 RC2.

Cross-site scripting (XSS) vulnerabilities have been downgraded from 3rd to 7th place in the 2017 list. This could have been influenced by new client-side security measures, such as Content Security Policy and XSS filters, that are built into most modern browsers.

This is a screenshot of the table contained in the Release Notes section of the 2017 publication, revealing what was removed, added or merged in comparison with the previous version (2013).

For a more detailed explanation of all the security flaws in the list, see OWASP Top 10 2017.

Mailsploit

From the 1990s to the 2000s, it was relatively easy for scammers to fake a sender’s email address. In 2017, security researcher Sabri Haddouche found a series of bugs in email clients that once again enabled attackers to spoof the sender shown in the From: header. He dubbed the vulnerability Mailsploit.

However, since the advent of Domain-based Message Authentication, Reporting and Conformance (DMARC), this type of attack has become much more difficult. DMARC is enabled by publishing specific values in Domain Name System (DNS) records, and it builds on two other DNS-based mechanisms: Sender Policy Framework (SPF) and DomainKeys Identified Mail (DKIM).
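To make the DNS side more concrete, here is a minimal Python sketch that splits a DMARC record into its tag=value pairs. The records are hypothetical examples for the placeholder domain example.com, and `parse_dmarc` is an illustrative helper, not part of any library.

```python
# Hypothetical DNS TXT records for the placeholder domain example.com:
#   SPF   (at example.com):                    "v=spf1 include:_spf.example.com -all"
#   DKIM  (at <selector>._domainkey.example.com): "v=DKIM1; k=rsa; p=<public key>"
#   DMARC (at _dmarc.example.com):              the record parsed below


def parse_dmarc(record: str) -> dict[str, str]:
    """Split a DMARC TXT record into its tag=value pairs."""
    tags = {}
    for part in record.split(";"):
        part = part.strip()
        if not part:
            continue  # tolerate a trailing semicolon
        key, _, value = part.partition("=")  # split on the first "=" only
        tags[key.strip()] = value.strip()
    return tags


policy = parse_dmarc("v=DMARC1; p=reject; rua=mailto:dmarc-reports@example.com")
print(policy["p"])  # the policy receivers should apply to failing mail
```

The `p` tag tells receiving servers what to do with mail that fails the SPF/DKIM alignment checks (`none`, `quarantine` or `reject`).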

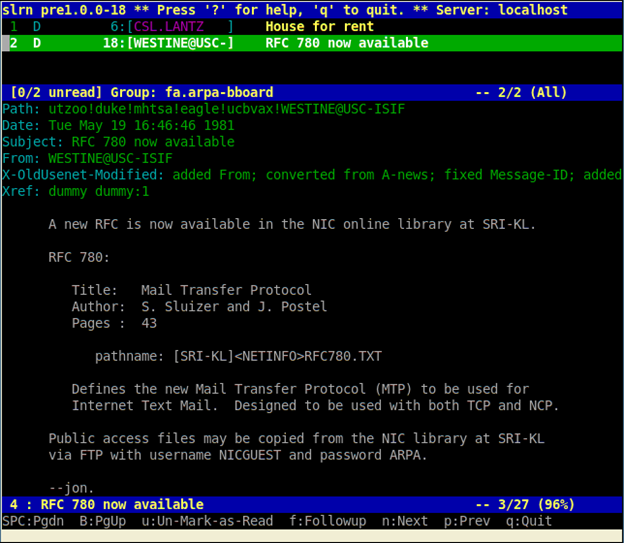

RFC 1342 Representation of Non-ASCII Text in Internet Message Headers

These new security measures are implemented by most, if not all, popular email services. But those precautions are ineffective if email services and clients display the wrong sender name to the user.

Shockingly, this is exactly what happened to thirty email services in 2017. The culprit was a detail in RFC 1342, Representation of Non-ASCII Text in Internet Message Headers, an Internet Activities Board (IAB) Official Protocol Standards document (memo) that describes how email clients should handle non-ASCII text in message headers such as From:.

This is how the vulnerability works. All fields in an email header, including From:, are expected to contain ASCII values. RFC 1342 describes a way to carry non-ASCII text in them anyway, and the Mailsploit vulnerability stems from the following passage:

- A mail composer that implements this specification will provide a means of inputting non-ASCII text in header fields, but will translate these fields (or appropriate portions of these fields) into encoded-words before inserting them into the message header.

- A mail reader that implements this specification will recognize encoded-words when they appear in certain portions of the message header. Instead of displaying the encoded-word “as-is”, it will reverse the encoding and display the original text in the designated character set.

According to RFC 1342, mail clients that want to adhere to the specification must be able to decode properly encoded non-ASCII characters within header fields. The encoded text looks like this:

=?utf-8?b?[BASE-64]?=

=?utf-8?Q?[QUOTED-PRINTABLE]?=
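The decoding step a mail reader performs can be sketched in a few lines of Python. This is a simplified, hypothetical decoder (`naive_decode` is our own name): it assumes charsets that Python can decode and ignores finer points such as whitespace between adjacent encoded-words. RFC 1342 was later revised as RFC 2047, which keeps the same `=?charset?encoding?text?=` syntax.

```python
import base64
import binascii
import re

# One encoded-word: =?charset?encoding?encoded-text?=  (encoding is B or Q)
ENCODED_WORD = re.compile(r"=\?([^?]+)\?([bBqQ])\?([^?]*)\?=")


def _decode_word(match: re.Match) -> str:
    charset, encoding, text = match.groups()
    if encoding in "bB":
        raw = base64.b64decode(text)  # "B" encoding: plain base64
    else:
        # "Q" encoding: quoted-printable in which "_" stands for a space
        raw = binascii.a2b_qp(text.encode("ascii"), header=True)
    return raw.decode(charset)


def naive_decode(header_value: str) -> str:
    """Replace every encoded-word with its decoded text, without any sanitizing."""
    return ENCODED_WORD.sub(_decode_word, header_value)


print(naive_decode("=?utf-8?Q?J=C3=BCrgen_M?= <j@example.com>"))  # Jürgen M <j@example.com>
```

Nothing in this decoder checks what the decoded text contains, and that is precisely the gap the next paragraph describes.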

Most email clients and email services overlooked a dangerous pitfall at this point: decoded values may contain dangerous characters such as null bytes and newlines. Injecting these characters can cut off the domain part of the sender’s email address as it is displayed, making it possible to show the user an arbitrary sender address.
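One way to close that gap is to inspect decoded values before displaying them. The sketch below assumes a hypothetical client policy: if a decoded header contains any control character (Unicode category `Cc`, which includes NUL, LF and CR), show the raw header instead. The function names are illustrative.

```python
import unicodedata


def has_control_chars(decoded: str) -> bool:
    """True if the text contains a control character (Unicode category Cc), e.g. NUL, LF or CR."""
    return any(unicodedata.category(ch) == "Cc" for ch in decoded)


def display_sender(raw_header: str, decoded: str) -> str:
    # Hypothetical policy: never render a decoded value that smuggles in control
    # characters; fall back to the raw, still-encoded header instead.
    return raw_header if has_control_chars(decoded) else decoded
```

Legitimate non-ASCII names such as "Jürgen" still pass, because letters are not in the `Cc` category.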

Haddouche found two different flaws in Apple’s mail clients:

- The client on iOS is vulnerable to a null-byte injection

- The client on macOS is vulnerable to an email(name) injection

A payload that combines both vectors works on both operating systems. Let’s examine a From: header field:

From: =?utf-8?b?${base64_encode('potus@whitehouse.gov')}?==?utf-8?Q?=00?==?utf-8?b?${base64_encode('(potus@whitehouse.gov)')}?=@mailsploit.com

This is the payload after the encoding process:

From: =?utf-8?b?cG90dXNAd2hpdGVob3VzZS5nb3Y=?==?utf-8?Q?=00?==?utf-8?b?KHBvdHVzQHdoaXRlaG91c2UuZ292KQ==?=@mailsploit.com
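The encoding step can be reproduced in Python to see where each part of the header comes from. `b_word` and `mailsploit_from` are hypothetical helper names standing in for the template’s `base64_encode` calls; note that the third encoded-word carries the parenthesized address, `(potus@whitehouse.gov)`.

```python
import base64


def b_word(text: str) -> str:
    """Wrap text in a UTF-8, base64 ("b") encoded-word."""
    encoded = base64.b64encode(text.encode("utf-8")).decode("ascii")
    return f"=?utf-8?b?{encoded}?="


def mailsploit_from(spoofed: str, real_domain: str) -> str:
    # Layout from the template: spoofed address, a Q-encoded NUL byte,
    # the spoofed address again in parentheses, then the sender's real domain.
    return (
        "From: "
        + b_word(spoofed)
        + "=?utf-8?Q?=00?="
        + b_word(f"({spoofed})")
        + "@"
        + real_domain
    )


print(mailsploit_from("potus@whitehouse.gov", "mailsploit.com"))
```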

When Mail.app decodes the header field above, it becomes:

From: potus@whitehouse.gov\0(potus@whitehouse.gov)@mailsploit.com

The email’s sender is displayed as potus@whitehouse.gov on both macOS and iOS. This is what is really going on:

- iOS will discard everything after the null-byte (the part after the \0 character)

- macOS ignores the null-byte, but will stop after the first valid email it sees (due to a bug in the parser)
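Both behaviors can be modeled with a toy Python sketch. These functions are deliberately simplified stand-ins written for illustration; they are not Apple’s parsing code, and the address pattern is far looser than a real RFC 5322 parser.

```python
import re

DECODED = "potus@whitehouse.gov\x00(potus@whitehouse.gov)@mailsploit.com"

# Deliberately naive address pattern: no whitespace, "@", parentheses or angle brackets
NAIVE_ADDRESS = re.compile(r"[^\s@()<>]+@[^\s@()<>]+")


def ios_like_display(value: str) -> str:
    # Toy model of the iOS behavior: everything after the first NUL byte is dropped
    return value.split("\x00", 1)[0]


def macos_like_display(value: str) -> str:
    # Toy model of the macOS behavior: ignore NUL bytes, then stop at the
    # first thing that looks like an email address
    cleaned = value.replace("\x00", "")
    match = NAIVE_ADDRESS.search(cleaned)
    return match.group(0) if match else cleaned


print(ios_like_display(DECODED))    # potus@whitehouse.gov
print(macos_like_display(DECODED))  # potus@whitehouse.gov
```

Neither model ever reaches `@mailsploit.com`, which is why the combined payload fools both platforms.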

Why Did Email Service Precautions Not Work?

While the email client mistakenly shows potus@whitehouse.gov, the DKIM and SPF checks are performed against the mailsploit.com domain. Since the mail really does come from that domain, all checks pass. The bug is in the code that displays the sender’s address, not in the code that checks the DMARC-related records.
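The mismatch can be illustrated with a small sketch of a hypothetical client-side consistency check. It assumes, as a simplification, that the domain the server authenticated is whatever follows the last `@` in the raw header, and compares it with the domain the user is actually shown. This is not part of DMARC itself; the function names are illustrative.

```python
def domain_of(address: str) -> str:
    # Simplification: the domain is whatever follows the last "@"
    return address.rsplit("@", 1)[1].strip().lower()


def sender_looks_consistent(raw_from: str, displayed_address: str) -> bool:
    """Hypothetical check: does the displayed domain match the authenticated one?"""
    return domain_of(raw_from) == domain_of(displayed_address)


RAW = (
    "From: =?utf-8?b?cG90dXNAd2hpdGVob3VzZS5nb3Y=?==?utf-8?Q?=00?="
    "=?utf-8?b?KHBvdHVzQHdoaXRlaG91c2UuZ292KQ==?=@mailsploit.com"
)
print(domain_of(RAW))                                        # mailsploit.com
print(sender_looks_consistent(RAW, "potus@whitehouse.gov"))  # False
```

Base64 never contains `@`, so the only `@` the undecoded header exposes is the one before the real domain.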

Was it Simply Sender Address Spoofing?

Unfortunately not! Attackers could inject more than null bytes and newline characters: even encoded XSS payloads could be placed in the From: header. After decoding, the unsanitized XSS payload remained intact.

A number of services were affected by this kind of XSS attack in the From: header. For example, both the HushMail and Open Mailbox email services happily executed the malicious HTML code.

A mail header containing an encoded XSS payload may look similar to this one:

From: =?utf-8?b?c2VydmljZUBwYXlwYWwuY29tPGlmcmFtZSBvbmxvYWQ9YWxlcnQoZG9jdW1lbnQuY29va2llKSBzcmM9aHR0cHM6Ly93d3cuaHVzaG1haWwuY29tIHN0eWxlPSJkaXNwbGF5Om5vbmUi?==?utf-8?Q?=0A=00?=@mailsploit.com

The mail client will decode the From: header above to this payload:

From: service@paypal.com<iframe onload=alert(document.cookie) src=https://www.hushmail.com style="display:none"\n\0@mailsploit.com
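Decoding the base64 part confirms what the header hides, and escaping the decoded text before inserting it into a web page neutralizes the markup. The sketch below uses Python’s standard `html.escape` as a stand-in for whatever output encoding a webmail template applies; it is an illustration, not how HushMail or Open Mailbox actually fixed the issue.

```python
import base64
import html

# Base64 text from the first encoded-word of the XSS header above
ENCODED = (
    "c2VydmljZUBwYXlwYWwuY29tPGlmcmFtZSBvbmxvYWQ9YWxlcnQoZG9jdW1lbnQuY29va2ll"
    "KSBzcmM9aHR0cHM6Ly93d3cuaHVzaG1haWwuY29tIHN0eWxlPSJkaXNwbGF5Om5vbmUi"
)

decoded = base64.b64decode(ENCODED).decode("utf-8")
safe = html.escape(decoded)  # escapes &, <, >, " and ' as character entities

print(decoded)  # markup a vulnerable client would render as a live <iframe>
print(safe)     # the same text, now inert
```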

For further information on the vulnerability, see Mailsploit.

Extended Validation Certificate (EV) – A New Way of Phishing

Statistics show that the use of the Secure Sockets Layer and Transport Layer Security (SSL/TLS) protocols is increasing by the day. However, SSL is something of a double-edged sword. It has many security advantages, but scammers who run phishing websites often use certificates to hoodwink their victims.

There are three types of certificates for websites: Domain Validation (DV), Organization Validation (OV) and Extended Validation (EV). OV and DV certificates look alike: depending on the browser, they usually display a green padlock at the left of the address bar. EV certificates display the green padlock too, but also the name of the company along with its country. (Look at your browser’s address bar right now to see an example.)
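Browsers do not read a "DV", "OV" or "EV" label from the certificate; the validation level is signalled by policy OIDs in the certificate’s Certificate Policies extension. The sketch below classifies a list of OIDs using the CA/Browser Forum’s reserved identifiers. It assumes the OIDs have already been extracted with an X.509 parser, and it simplifies reality: browsers in 2017 also recognized CA-specific EV OIDs, which this table does not cover.

```python
# CA/Browser Forum reserved certificate-policy OIDs for each validation level
CABF_POLICY_LEVELS = {
    "2.23.140.1.1": "EV",    # Extended Validation
    "2.23.140.1.2.2": "OV",  # Organization Validation
    "2.23.140.1.2.1": "DV",  # Domain Validation
}


def validation_level(policy_oids: list[str]) -> str:
    """Return the highest validation level asserted by a certificate's policy OIDs."""
    for level in ("EV", "OV", "DV"):
        if any(CABF_POLICY_LEVELS.get(oid) == level for oid in policy_oids):
            return level
    return "unknown"  # e.g. a CA-specific EV OID that is not in this table


print(validation_level(["2.23.140.1.1"]))  # EV
```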

Unfortunately there are some inconsistencies when it comes to displaying EV certificate information across browsers. For further technical details, see Scott Helme’s recent blog post, Are EV certificates worth the paper they’re written on?.

Security Research With EV Certificates

Getting an EV certificate is supposed to be tough, since you need a registered company. Even so, two security researchers have published accounts of how they obtained EV certificates without any problems, and how they could have used them in phishing attacks.

Exploiting Browsers’ EV Certificate Ownership Information Positioning

The first experiment was conducted by James Burton. He applied for a Symantec EV certificate for a company he had registered and named ‘Identity Verified’. It turns out that registering the company and obtaining the certificate cost only about £40; for the certificate, he used a free, one-month plan from Symantec.

Why he chose this company name is pretty obvious. In Safari, on a site with an EV certificate, the company name takes over the entire address bar. In this example, all the user sees in the address bar is the phrase ‘Identity Verified’.

This is what it would look like on a cellphone.

So, when an unsuspecting user sees this above a Google login page, the user naturally assumes that it’s Google’s identity that is verified. A phishing attempt using this technique will most likely be highly effective!

The situation is different in Firefox and Chrome. In Firefox, the website’s registered company name is displayed to the left of the address bar.

Chrome is similar. Although the URL remains visible, an attacker could still use a phishing domain and a page logo that matches the one in the ‘Identity Verified’ popup. This is much more difficult for a hacker to achieve, though, because they would most likely be detected while acquiring an EV certificate. Read all the details about this EV certificate experiment.

Gaming EV Ownership With Stripe

Ian Carroll was another security researcher who conducted a similar experiment. He took a slightly different approach from James Burton’s, exploiting the fact that browsers display the company name and country in the address bar. The company he registered for the experiment was called ‘Stripe, Inc’. If that sounds familiar, it is probably because a popular payment processing company has the same name!

So, when you visit the website he set up, https://stripe.ian.sh/, you will see ‘Stripe, Inc [US]’ to the left of the address bar.

The website’s EV certificate was issued by Comodo. But when you see ‘Stripe, Inc [US]’, you probably think of the real Stripe, Inc (the payment processor from Delaware). However, this website belongs to Ian’s company in Kentucky. Only the state is different. This confusion is easy to cause because US companies are registered at the state level, so two unrelated companies in different states can share the same name, while the browser only displays the country.

We’ve mentioned Safari’s behavior above. In this example, the other browsers fare no better! Chrome and Firefox will also display ‘Stripe, Inc [US]’ as the EV’s ownership. You can read more about the vulnerability on Ian’s website.
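The collision is easy to reproduce with two hypothetical certificate subjects modeled on the article’s example, written in the nested-tuple shape that Python’s `ssl.SSLSocket.getpeercert()` returns for `subject`. `ev_label` is an illustrative approximation of the browser label: it keeps only the organization and country, which is why the state never reaches the user.

```python
def subject_fields(subject) -> dict:
    """Flatten getpeercert()'s nested subject tuples into a name -> value dict."""
    return {name: value for rdn in subject for name, value in rdn}


def ev_label(subject) -> str:
    # Approximation of the browser label: organization and country only
    fields = subject_fields(subject)
    return f"{fields['organizationName']} [{fields['countryName']}]"


# Hypothetical subjects: same organization name, different states
delaware_subject = (
    (("countryName", "US"),),
    (("stateOrProvinceName", "Delaware"),),
    (("organizationName", "Stripe, Inc"),),
)
kentucky_subject = (
    (("countryName", "US"),),
    (("stateOrProvinceName", "Kentucky"),),
    (("organizationName", "Stripe, Inc"),),
)

print(ev_label(delaware_subject))                                 # Stripe, Inc [US]
print(ev_label(delaware_subject) == ev_label(kentucky_subject))  # True
```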

It is very difficult for the average user to spot this type of phishing site.

.dev Support From Google!

Google has been a central driving force behind the widespread adoption of Transport Layer Security (TLS). Since 2010, Google has used HTTPS by default for Gmail, and it also gave websites that used SSL an advantage in its search rankings. Naturally, this strong stance helped to increase the popularity of SSL elsewhere. In addition, Google was a gold sponsor of Let’s Encrypt, which provides SSL certificates free of charge (Netsparker sponsors Let’s Encrypt too).

At the beginning of 2017, Google began warning users about websites with insecure HTTP connections, by displaying the ‘Not Secure’ notification. It was a giant leap forward that helped to push HTTPS and insecure connections into the spotlight, particularly for average users.

Then Google crowned its efforts with a brand new move: it announced that it will add the .dev and .foo top-level domains (TLDs) to its HSTS preload list. As a result, all .dev and .foo domains need to be served over HTTPS.

If HTTPS is not enforced, an attacker performing a man-in-the-middle (MITM) attack could serve an otherwise encrypted website over unencrypted HTTP. For this reason, it’s important to serve your website exclusively over a secure connection whenever sensitive data is transmitted. The attacker might also present an invalid SSL certificate for the attacked website, and users might simply click through the warning prompt to get to the content they wanted to see. HSTS prevents both: it instructs the browser to connect to the site only over HTTPS, and it removes the option to click through certificate errors.

This sounds like a good thing. The problem is that HSTS is usually activated through an HTTP response header. So could an attacker simply strip the header from a plain HTTP response? Unfortunately, that’s a very real threat! HSTS is based on the Trust on First Use (TOFU) model: the browser only learns the policy once it has received the header over a first, untampered connection. However, there is an easy way to enable HSTS for a site even if the browser has never visited it. In Google Chrome, this is done by adding the site to the so-called HSTS preload list, a list hardcoded into the Chrome browser’s source code. When a user visits a site, Chrome checks whether the site is on this list. If it is, Chrome enables HSTS, even if the browser has never visited the site before.

Adding your website to the list requires a few steps. It also takes some time for the change to take effect, as the updated list is only distributed with a new Chrome release. Since .dev and .foo domains are mostly used in development environments and are not public, individual sites using them cannot be added to the list. In fact, .dev is a valid TLD; however, Google owns this extension and uses it for internal purposes only.
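For reference, here is a minimal Python sketch that parses a `Strict-Transport-Security` header value. `max-age` and `includeSubDomains` are defined in RFC 6797, while `preload` is a convention required by the preload list submission process rather than part of the RFC; `parse_hsts` is an illustrative helper name. Parsing only helps if the header reached the browser untampered, which is exactly the TOFU weakness described above.

```python
def parse_hsts(header_value: str) -> dict:
    """Parse a Strict-Transport-Security header value into its directives."""
    directives = {}
    for part in header_value.split(";"):
        part = part.strip()
        if not part:
            continue
        name, _, value = part.partition("=")
        # Directive names are case-insensitive and values may be quoted;
        # value-less directives such as includeSubDomains are stored as True
        directives[name.strip().lower()] = value.strip().strip('"') or True
    return directives


policy = parse_hsts("max-age=31536000; includeSubDomains; preload")
max_age = int(policy["max-age"])  # seconds the browser must remember "HTTPS only"
print(policy, max_age)
```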

With this change, Google now forces developers to either enable HTTPS in their development environment or use one of the TLDs below, which are reserved (RFC 2606) specifically for testing and development:

- .test

- .example

- .invalid

- .localhost
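How a TLD-level entry catches every development domain can be sketched as a lookup that walks from the full host name up through its parent domains. This is a simplified model, not Chromium’s implementation; `is_hsts_preloaded` is an illustrative name, and the list contents are hypothetical apart from the .dev and .foo entries described above.

```python
# Simplified preload list: entry -> whether it also covers subdomains.
# Hypothetical contents, apart from the TLD-wide .dev and .foo entries.
PRELOAD = {"dev": True, "foo": True, "example.com": False}


def is_hsts_preloaded(host: str, preload: dict) -> bool:
    """Check the host and each parent domain against the preload entries."""
    labels = host.lower().rstrip(".").split(".")
    for i in range(len(labels)):
        candidate = ".".join(labels[i:])  # e.g. "api.myproject.dev", "myproject.dev", "dev"
        if candidate in preload and (i == 0 or preload[candidate]):
            # Exact matches always count; parent entries count only if they cover subdomains
            return True
    return False


for host in ("myproject.dev", "myproject.test", "localhost"):
    print(host, is_hsts_preloaded(host, PRELOAD))
```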

If you are interested in this topic, you can read Google’s blog post, Broadening HSTS to secure more of the Web.

Recon is Everywhere!

Recon is an abbreviation of the word ‘reconnaissance’. In our context, it refers to exploration in order to gather lots of information as efficiently as possible. According to some experts in the field, it is the most important step of every penetration test.

Evren Yalçın, one of our friends in web security, who has the coolest job title ever (Attack Developer), wrote Recon is Everywhere from his unique viewpoint. It includes some really helpful hints for penetration testing aficionados.

Company Logos

Have you ever wondered whether you missed some web pages during the reconnaissance phase of a penetration test? More often than not, there are pages that even their owners have forgotten about. Old campaign pages and shareholder or product websites are just a few examples. You can find such inactive company web pages using Google’s Reverse Image Search. Simply upload the company’s logo and you could discover some forgotten websites.

Copyright

Another interesting trick is to conduct a search for the copyright text that is often found at the bottom of a company web page. For example, if you want to find pages belonging to example.com, you could type “© 2017 example.com” into Google.
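If you want to try several years at once, the search URLs can be generated. This is a hypothetical helper that only builds Google search URLs with the standard `q` parameter; it does not fetch or scrape any results.

```python
from urllib.parse import urlencode


def copyright_queries(domain: str, years: range) -> list[str]:
    """Build Google search URLs for exact-phrase copyright footers (hypothetical helper)."""
    urls = []
    for year in years:
        phrase = f'"© {year} {domain}"'  # the quotes ask for an exact-phrase match
        urls.append("https://www.google.com/search?" + urlencode({"q": phrase}))
    return urls


for url in copyright_queries("example.com", range(2015, 2018)):
    print(url)
```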

Humans.TXT

The humans.txt file aims to tell website visitors who participated in creating the application. Not only is it a great way to find out who coded the website, but it’s also a lamentably straightforward way for attackers to find related GitHub pages and gain more information about a company and its staff.
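Because humans.txt has no strict format, extracting names and handles usually means looking for "Key: value" lines. The sketch below does that on a hypothetical sample file; `humans_txt_fields` is an illustrative helper, and the field names in the sample are examples rather than a standard.

```python
def humans_txt_fields(text: str) -> list[tuple[str, str]]:
    """Collect "Key: value" lines from a humans.txt file (its format is informal)."""
    fields = []
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("/*"):
            continue  # skip blank lines and section headers such as /* TEAM */
        key, sep, value = line.partition(":")  # split on the first colon only
        if sep and value.strip():
            fields.append((key.strip(), value.strip()))
    return fields


SAMPLE = """/* TEAM */
Developer: Jane Doe
GitHub: janedoe-dev
Location: Example City

/* SITE */
Standards: HTML5, CSS3
"""

for key, value in humans_txt_fields(SAMPLE):
    print(f"{key}: {value}")
```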

Reverse Analytics

Using Google Analytics to track what visitors do on your website, or to gather statistics on how many people your content reaches, involves adding a piece of JavaScript code with a unique identifier to your site. This enables Google to distinguish between websites and present you with the correct data for your pages.

This is an example of the code snippet used.

The problem is that if you use the same tracking code across different websites, an attacker can easily find all the websites that use it. This means the attacker can find all the pages you set up, including outdated websites you forgot about. Two tools simplify this process: http://www.gachecker.com/ and http://moonsearch.com/analytics/.
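The matching itself is simple. The sketch below extracts classic Universal Analytics IDs (`UA-<account>-<property>`) from page sources and groups sites that share an account number. The page sources are hypothetical; in a real assessment they would come from crawling, and `group_by_account` is an illustrative helper, not how the tools above work internally.

```python
import re
from collections import defaultdict

# Classic Universal Analytics property IDs look like UA-<account>-<property>
UA_ID = re.compile(r"\bUA-(\d{4,10})-(\d{1,4})\b")


def group_by_account(pages: dict[str, str]) -> dict[str, set[str]]:
    """Map each analytics account number to the set of sites whose HTML embeds it."""
    accounts = defaultdict(set)
    for site, html_source in pages.items():
        for account, _property in UA_ID.findall(html_source):
            accounts[account].add(site)
    return dict(accounts)


# Hypothetical page sources
pages = {
    "www.example.com": "<script>gtag('config', 'UA-1234567-1');</script>",
    "old-campaign.example.net": "<script>ga('create', 'UA-1234567-4', 'auto');</script>",
    "unrelated.example.org": "<script>gtag('config', 'UA-7654321-1');</script>",
}
print(group_by_account(pages))
```

Grouping by the account part rather than the full ID catches sites that use different properties under the same analytics account.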