How Can I Ensure That the Web Vulnerability Scanner Scanned My Entire Website?

This FAQ describes several methods you can use to verify that the web application security scanner achieved good coverage and scanned all of your websites and web applications.



There are several ways to check that the web vulnerability scanner automatically crawled and scanned all sections of your website. Each is documented below.

Check the Sitemap

The Sitemap in both Netsparker Desktop and Netsparker Enterprise lists all the objects (files, GET / POST parameters, etc.) that the scanner crawled and scanned. Review the sitemap and check that every component and section of your website appears in it.

Anything that is listed in the sitemap has been crawled and scanned by the web vulnerability scanner.
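On a large site, comparing the sitemap by eye is error-prone. The following is a minimal sketch, assuming you already have two plain lists of URLs: the pages you expect to exist (for example, from your own routing table or CMS) and the URLs shown in the scanner's sitemap. How you obtain either list is not covered here. The `normalize` and `find_missing` helpers are hypothetical names. They ignore query strings and fragments, so only page paths are compared and parameters are not.

```python
from urllib.parse import urlsplit, urlunsplit


def normalize(url: str) -> str:
    """Reduce a URL to scheme://host/path so that query strings, fragments,
    host casing and trailing slashes do not cause false mismatches."""
    parts = urlsplit(url.strip())
    path = parts.path.rstrip("/") or "/"
    return urlunsplit((parts.scheme.lower(), parts.netloc.lower(), path, "", ""))


def find_missing(expected: list[str], crawled: list[str]) -> list[str]:
    """Return the expected URLs whose normalized form is absent from the crawled list."""
    crawled_set = {normalize(u) for u in crawled}
    return sorted(u for u in expected if normalize(u) not in crawled_set)


if __name__ == "__main__":
    # Hypothetical example data; replace with your own lists.
    expected = [
        "https://example.com/",
        "https://example.com/login",
        "https://example.com/admin/reports",
    ]
    crawled = [
        "https://example.com/",
        "https://example.com/login?next=/",
    ]
    for url in find_missing(expected, crawled):
        print("Not in sitemap:", url)
```

Any URL this prints is a candidate for the steps described at the end of this FAQ.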

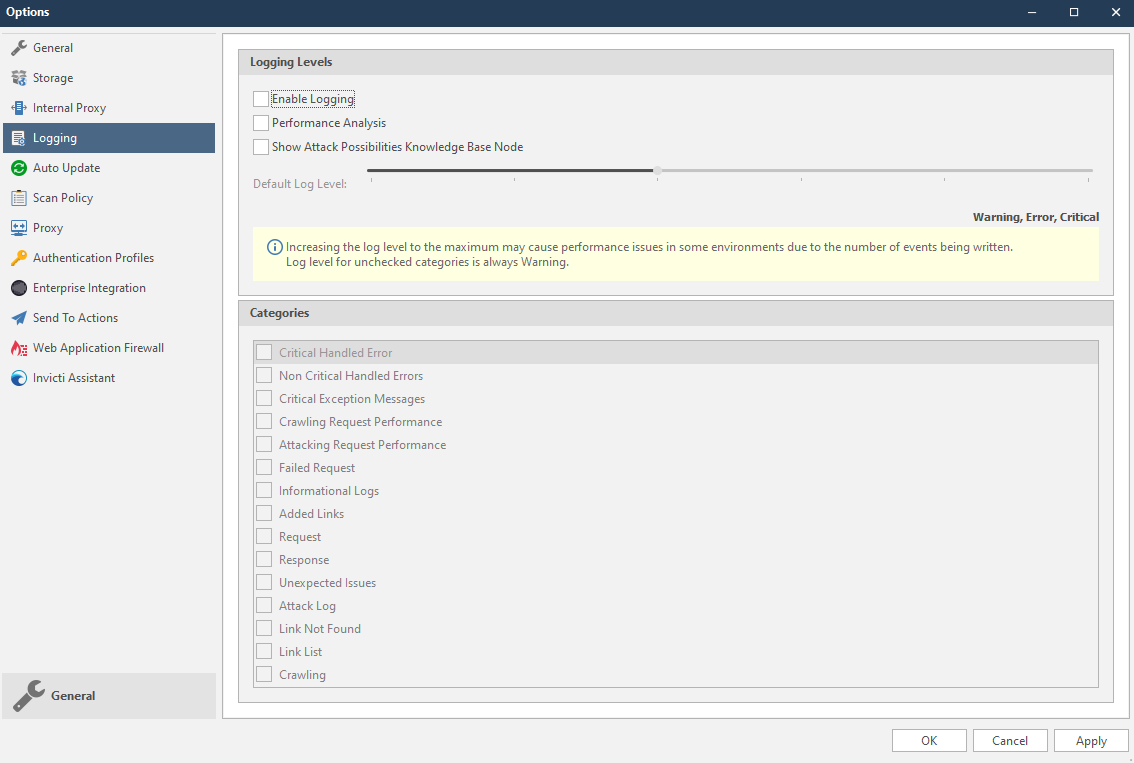

Increase the Logging Verbosity (Netsparker Desktop)

You can configure Netsparker Desktop to log everything that happens during a scan from the Logging section in the scanner's Options. You can open the Options by pressing F4 or from the Tools drop-down menu.

Use the slider to set the verbosity of the logs, then enable the logging categories that apply. For checking coverage, you can start with:

- failed requests

- added links

- request

- response

- link not found

- link list

- crawling

Once the scan has finished, navigate to the My Documents\Netsparker\Scans\ directory, find the scan, and open the scan log file named nstrace.csv.
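A verbose nstrace.csv can be long, so filtering it for the categories you care about helps. The sketch below uses Python's standard `csv` module. It assumes the file has a header row, but the column name `Category` and the sample rows are hypothetical. Open your own nstrace.csv first and pass in whichever column actually holds the log category.

```python
import csv
import io
from typing import Iterable, TextIO


def filter_log(stream: TextIO, column: str, keywords: Iterable[str]) -> list[dict[str, str]]:
    """Return rows whose `column` value contains any keyword (case-insensitive)."""
    wanted = [k.lower() for k in keywords]
    reader = csv.DictReader(stream)
    # Reading fieldnames consumes the header row; fail early if the column is absent.
    if reader.fieldnames is None or column not in reader.fieldnames:
        raise ValueError(f"column {column!r} not found; header is {reader.fieldnames}")
    return [row for row in reader if any(k in (row[column] or "").lower() for k in wanted)]


if __name__ == "__main__":
    # Hypothetical sample; for a real scan use:
    #   with open(r"...\nstrace.csv", newline="", encoding="utf-8") as f: ...
    sample = io.StringIO(
        "Time,Category,Message\n"
        "10:00:01,Crawling,Found /login\n"
        "10:00:02,Failed Request,Timeout on /admin\n"
        "10:00:03,Link Not Found,/old-page\n"
    )
    for row in filter_log(sample, "Category", ["failed", "not found"]):
        print(row["Time"], row["Category"], row["Message"])
```

Raising an error on a missing column is deliberate. If the log uses different headers, silently returning no rows would look like "no failures".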

Analyze the HTTP Requests and Responses

For the most detailed view of a scan, you can inspect the HTTP requests and responses exchanged between the scanner and your website during the scan.
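When you review the exchanges, groups of error responses or unexpected redirects (for example, to a login page after the session expired) can explain why a section was never crawled. This minimal sketch assumes you have already copied each exchange's URL and status code into Python as `(url, status)` pairs. Extracting them from the scanner is not shown. It buckets the URLs by status class so the non-2xx ones stand out.

```python
from collections import defaultdict


def group_by_status_class(exchanges: list[tuple[str, int]]) -> dict[str, list[str]]:
    """Group (url, status) pairs into '2xx', '3xx', '4xx', '5xx' buckets, keeping input order."""
    groups: dict[str, list[str]] = defaultdict(list)
    for url, status in exchanges:
        groups[f"{status // 100}xx"].append(url)
    return dict(groups)


if __name__ == "__main__":
    # Hypothetical exchanges for illustration only.
    exchanges = [
        ("/", 200),
        ("/account", 302),
        ("/admin/reports", 403),
        ("/search", 500),
        ("/about", 200),
    ]
    for bucket, urls in sorted(group_by_status_class(exchanges).items()):
        if bucket != "2xx":
            print(bucket, urls)
```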

What to Do If the Scanner Missed a Page

If Netsparker misses a page or parameter, you can do the following:

- Report the issue to the support team if the problem appears to be a crawling issue.

- Manually crawl that section of the website before the scan.

- Import the requests into Netsparker when configuring the scan.
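If you have a list of missed URLs, you may be able to turn it into an importable file. This sketch writes a minimal HTTP Archive (HAR 1.2) document using only the standard library. It assumes your Netsparker version accepts HAR files as an import format, so check the import options in your scan configuration before relying on it. Each entry is a bare GET request with a placeholder response (status 0) because nothing was actually recorded. An importer that requires real responses may reject it. The creator name and the URLs are hypothetical.

```python
import json
from datetime import datetime, timezone
from urllib.parse import parse_qsl, urlsplit


def har_entry(url: str, method: str = "GET") -> dict:
    """Build one minimal HAR 1.2 entry for a request with no recorded response."""
    query = [{"name": n, "value": v} for n, v in parse_qsl(urlsplit(url).query)]
    return {
        "startedDateTime": datetime.now(timezone.utc).isoformat(),
        "time": 0,
        "request": {
            "method": method, "url": url, "httpVersion": "HTTP/1.1",
            "cookies": [], "headers": [], "queryString": query,
            "headersSize": -1, "bodySize": -1,
        },
        # Placeholder response: required by the HAR schema, but nothing was captured.
        "response": {
            "status": 0, "statusText": "", "httpVersion": "HTTP/1.1",
            "cookies": [], "headers": [], "content": {"size": 0, "mimeType": ""},
            "redirectURL": "", "headersSize": -1, "bodySize": -1,
        },
        "cache": {},
        "timings": {"send": 0, "wait": 0, "receive": 0},
    }


def build_har(urls: list[str]) -> dict:
    """Wrap one entry per URL in a HAR 1.2 log object."""
    return {
        "log": {
            "version": "1.2",
            "creator": {"name": "missed-pages-sketch", "version": "0.1"},
            "entries": [har_entry(u) for u in urls],
        }
    }


if __name__ == "__main__":
    missed = ["https://example.com/admin/reports?year=2023"]  # hypothetical URLs
    # Redirect stdout to a .har file, e.g. `python make_har.py > missed.har`.
    print(json.dumps(build_har(missed), indent=2))
```

Query parameters are copied into `queryString` as well as the URL, because the HAR format records both.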